Posts by Amr Elmeleegy

Development & Optimization

Mar 18, 2025

Introducing NVIDIA Dynamo, A Low-Latency Distributed Inference Framework for Scaling Reasoning AI Models

NVIDIA announced the release of NVIDIA Dynamo today at GTC 2025. NVIDIA Dynamo is a high-throughput, low-latency open-source inference serving framework for...

14 MIN READ

Data Center / Cloud

Dec 05, 2024

Spotlight: Perplexity AI Serves 400 Million Search Queries a Month Using NVIDIA Inference Stack

The demand for AI-enabled services continues to grow rapidly, placing increasing pressure on IT and infrastructure teams. These teams are tasked with...

7 MIN READ

Data Center / Cloud

Nov 21, 2024

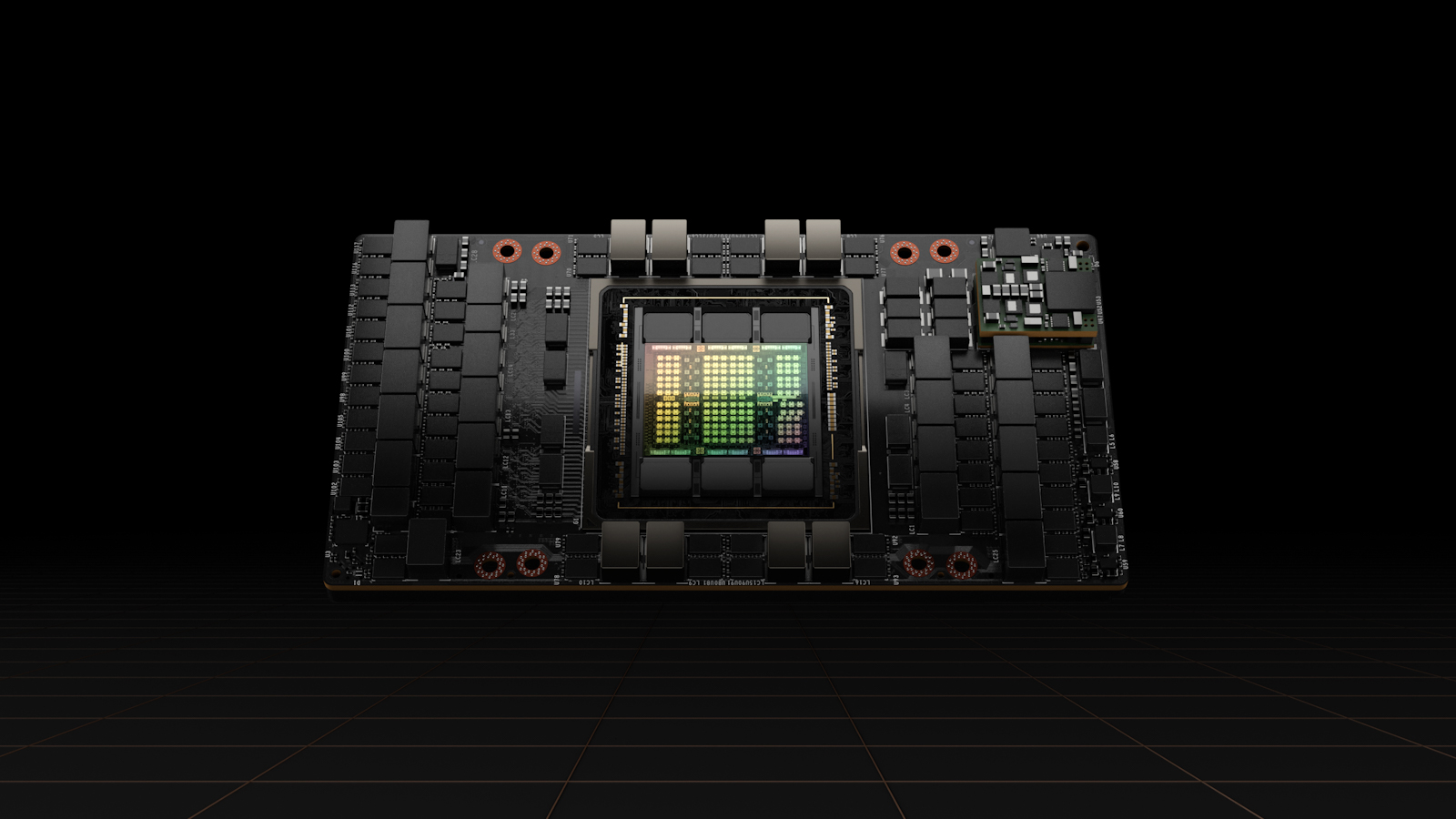

NVIDIA TensorRT-LLM Multiblock Attention Boosts Throughput by More Than 3x for Long Sequence Lengths on NVIDIA HGX H200

Generative AI models are advancing rapidly. Every generation of models comes with a larger number of parameters and longer context windows. The Llama 2 series...

5 MIN READ

Data Center / Cloud

Nov 15, 2024

Streamlining AI Inference Performance and Deployment with NVIDIA TensorRT-LLM Chunked Prefill

In this blog post, we take a closer look at chunked prefill, a feature of NVIDIA TensorRT-LLM that increases GPU utilization and simplifies the deployment...

4 MIN READ

Generative AI

Nov 08, 2024

5x Faster Time to First Token with NVIDIA TensorRT-LLM KV Cache Early Reuse

In our previous blog post, we demonstrated how reusing the key-value (KV) cache by offloading it to CPU memory can accelerate time to first token (TTFT) by up...

5 MIN READ

Generative AI

Nov 01, 2024

3x Faster AllReduce with NVSwitch and TensorRT-LLM MultiShot

Deploying generative AI workloads in production environments where user numbers can fluctuate from hundreds to hundreds of thousands – and where input...

5 MIN READ