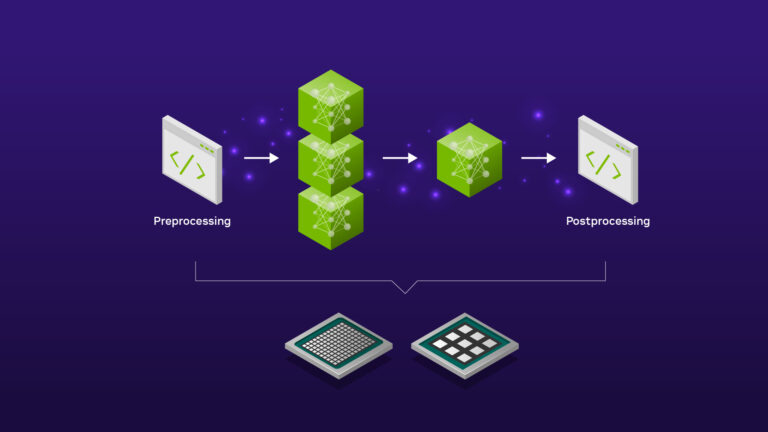

As of March 18, 2025, NVIDIA Triton Inference Server is part of the NVIDIA Dynamo Platform and has been renamed NVIDIA Dynamo Triton. In many production machine learning (ML) applications, inference is not limited to running a forward pass on a single ML model. Instead, a pipeline of ML models often needs to be executed. Take, for example, a conversational AI pipeline that consists of three modules: an automatic speech recognition (ASR) module to…
