A primary goal in the field of neuroscience is understanding how the brain controls movement. By improving pose estimation, neurobiologists can more precisely quantify natural movement and, in turn, better understand the neural activity that drives it. This enhances scientists' ability to characterize animal intelligence, social interaction, and health.

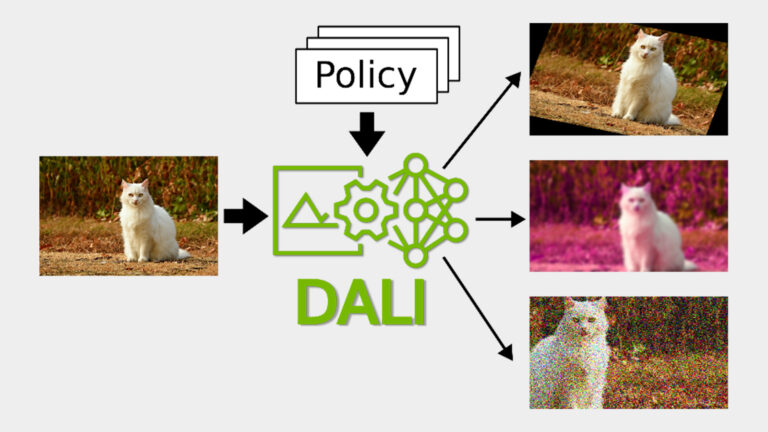

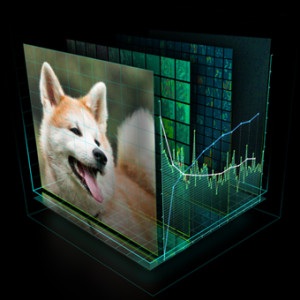

Deep learning models require hundreds of gigabytes of data to generalize well on unseen samples. Data augmentation helps by increasing the variability of examples in datasets. The traditional approach to data augmentation dates back to statistical learning, when the choice of augmentation relied on the domain knowledge, skill, and intuition of the engineers who set up the model training.

Deep learning models require vast amounts of data to produce accurate predictions, and this need becomes more acute every day as models grow in size and complexity. Even large datasets, such as the well-known ImageNet with more than a million images, are not sufficient to achieve state-of-the-art results in modern computer vision tasks. For this purpose, data augmentation techniques are…

In MLPerf HPC v1.0, NVIDIA-powered systems won four of five new industry metrics focused on AI performance in HPC. As an industry-wide AI consortium, MLPerf HPC evaluates a suite of performance benchmarks covering a range of widely used AI workloads. In this round, NVIDIA delivered 5x better results for CosmoFlow and 7x more performance on DeepCAM, compared to strong scaling results from…

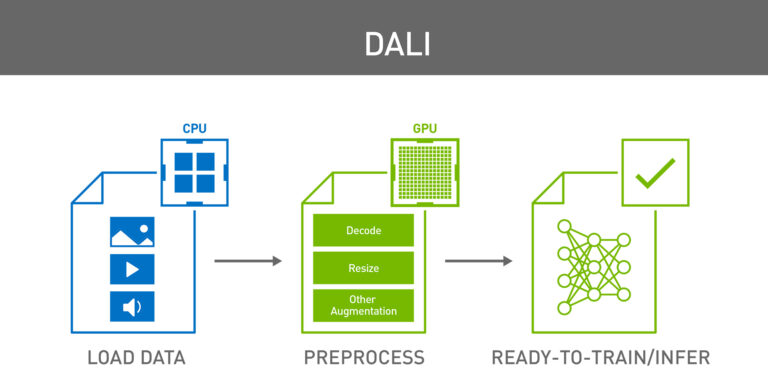

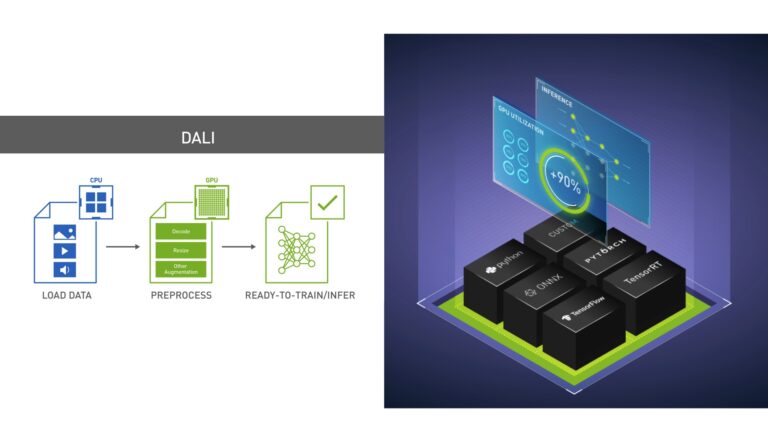

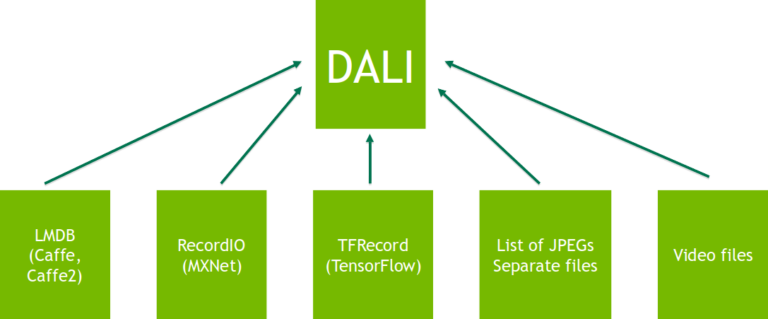

This post is an update to an older post. Deep learning models require training with vast amounts of data to achieve accurate results. Raw data usually cannot be fed directly into a neural network for various reasons, such as different storage formats, compression, data format and size, and a limited amount of high-quality data. Addressing these issues requires extensive data preparation…

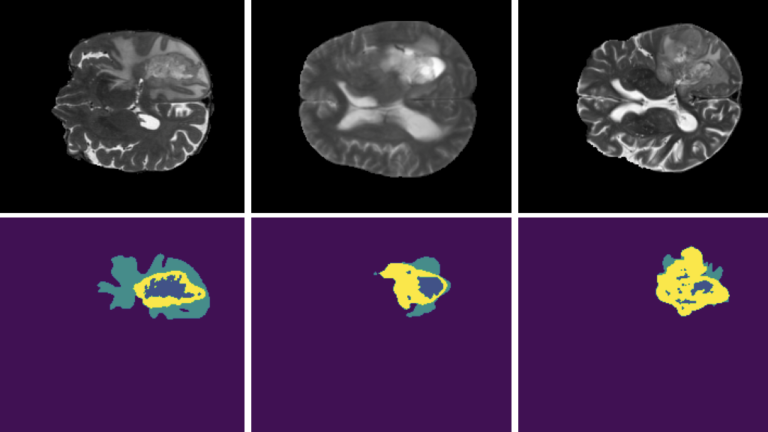

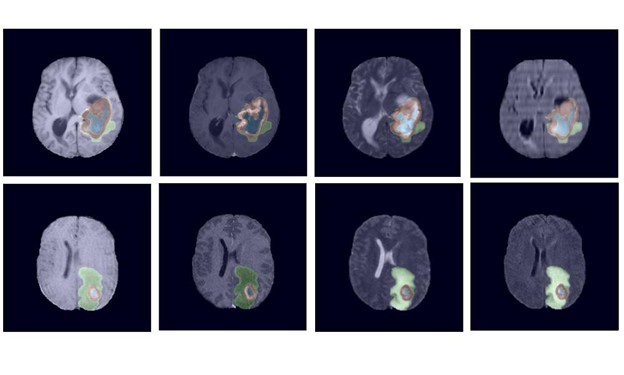

NVIDIA data scientists this week took three of the top 10 spots in a brain tumor segmentation challenge validation phase at the prestigious MICCAI 2021 medical imaging conference. Now in its tenth year, the BraTS challenge tasked applicants with submitting state-of-the-art AI models for segmenting heterogeneous brain glioblastoma sub-regions in multi-parametric magnetic resonance imaging…

Join the NVIDIA Triton and NVIDIA TensorRT community to stay current on the latest product updates, bug fixes, content, best practices, and more. When you are working on optimizing inference scenarios for the best performance, you may underestimate the effect of data preprocessing. These are the operations required before forwarding an input sample through the model. This post highlights the…

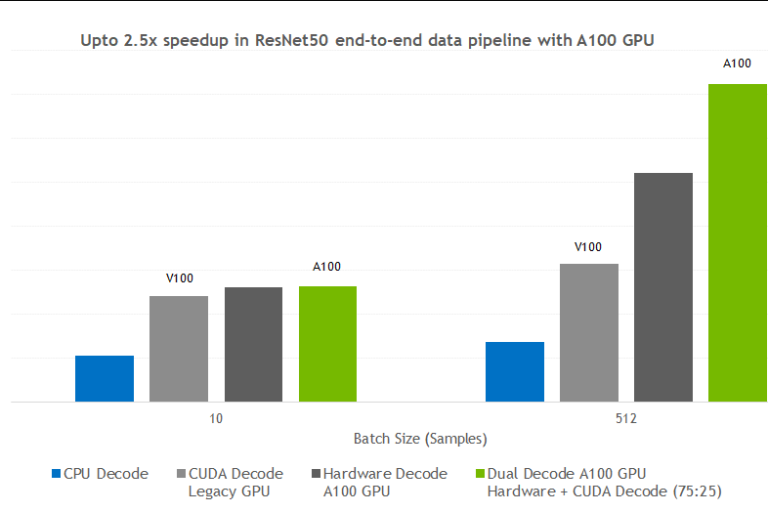

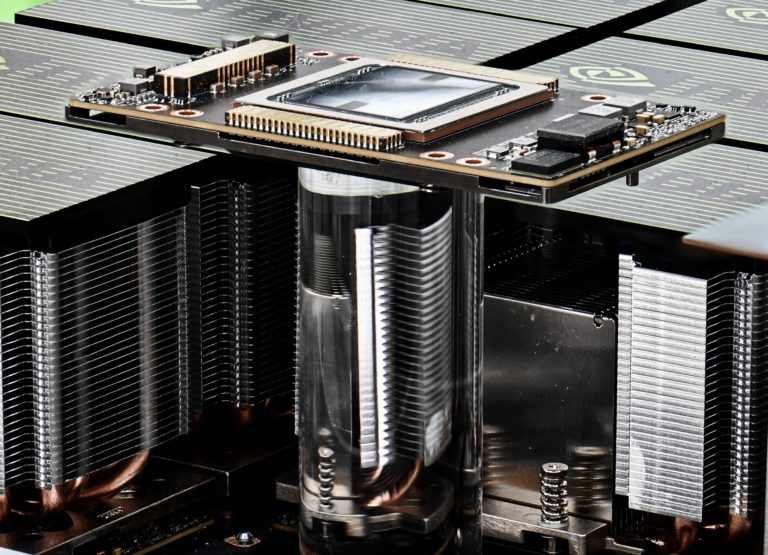

During the 2020 NVIDIA GPU Technology Conference keynote address, NVIDIA founder and CEO Jensen Huang introduced the new NVIDIA A100 GPU based on the NVIDIA Ampere GPU architecture. In this post, we detail the exciting new features of the A100 that make NVIDIA GPUs an ever-better powerhouse for computer vision workloads. We also showcase two recent CV research projects from NVIDIA Research…

Today, smartphones, the most popular device for taking pictures, can capture images as large as 4K UHD (3840×2160), more than 25 MB of raw data. Even considering the embarrassingly low HD resolution (1280×720), a raw image requires more than 2.5 MB of storage. Storing as few as 100 UHD images would require almost 3 GB of free space. Clearly, if you store data this way…
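
The storage figures above can be roughly reproduced with a short calculation. This is a minimal sketch that assumes uncompressed 8-bit RGB (3 bytes per pixel), which the excerpt does not state; the helper name `raw_image_bytes` is hypothetical. Under that assumption the results land close to the rounded figures quoted in the text.

```python
def raw_image_bytes(width: int, height: int,
                    channels: int = 3, bytes_per_channel: int = 1) -> int:
    """Size in bytes of an uncompressed image (assumes 8-bit RGB by default)."""
    return width * height * channels * bytes_per_channel


uhd = raw_image_bytes(3840, 2160)  # 4K UHD frame
hd = raw_image_bytes(1280, 720)    # HD frame

print(f"UHD image:      {uhd / 1e6:.1f} MB")        # about 24.9 MB
print(f"HD image:       {hd / 1e6:.1f} MB")         # about 2.8 MB
print(f"100 UHD images: {100 * uhd / 1e9:.2f} GB")  # about 2.49 GB
```

A different pixel format (for example, more than 8 bits per channel) would scale these numbers up proportionally.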

Let's imagine a situation. You buy a brand-new, cutting-edge, Volta-powered DGX-2 server. You've done your math right, expecting a 2x performance increase in ResNet50 training over the DGX-1 you had before. You plug it into your rack cabinet and run the training. That's when an unpleasant surprise pops up. Even though your math is correct, the speedup you're getting is lower than expected. Why?

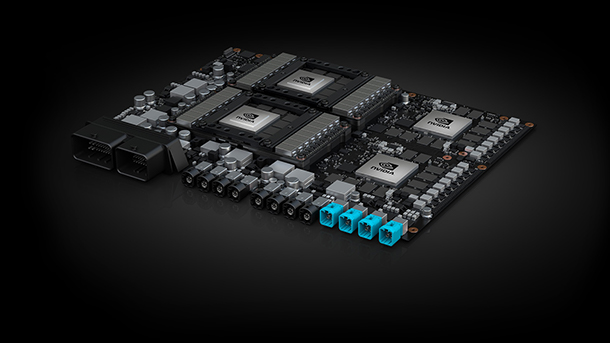

Autonomous vehicles require fast and accurate perception of the surrounding environment in order to accomplish a wide set of tasks concurrently in real time. Systems need to detect obstacles, determine lane boundaries, detect intersections, and recognize signs, among many other functions, over a large variety of environments, conditions, and situations and do this work…

Earlier this year in March, we showed retinanet-examples, an open source example of how to accelerate the training and deployment of an object detection pipeline for GPUs. We presented the project at NVIDIA's GPU Technology Conference in San Jose. This post discusses the motivation for this work, a high-level description of the architecture, and a brief look under the hood at the optimizations we…

Learn what's new in the latest releases of cuDNN, CUDA, TensorRT, DALI, and Nsight Compute. The NVIDIA CUDA Deep Neural Network library (cuDNN) is a GPU-accelerated library of primitives for deep neural networks. CUDA is the parallel computing platform and programming model for general-purpose computing on NVIDIA GPUs.

Editor's Note: This post has been updated. Here is the revised post. Training deep learning models with vast amounts of data is necessary to achieve accurate results. Data in the wild, or even prepared data sets, is usually not in a form that can be fed directly into a neural network. This is where NVIDIA DALI data preprocessing comes into play. There are various reasons for that…

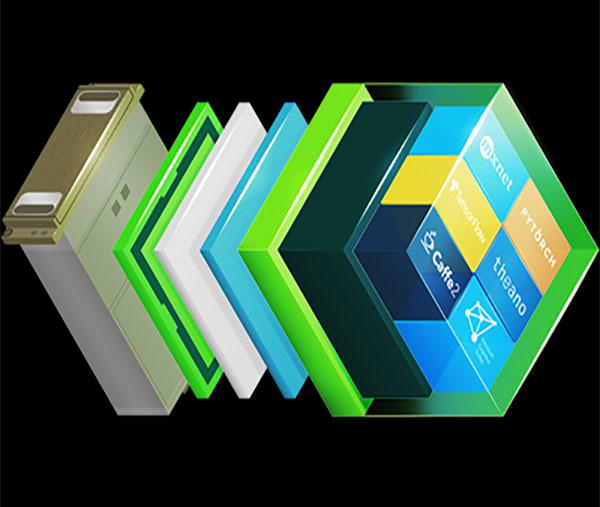

The pace of AI adoption across diverse industries depends on maximizing data scientists' productivity. NVIDIA releases optimized NGC containers every month with improved performance for deep learning frameworks and libraries, helping scientists maximize their potential. NVIDIA continuously invests in the full data science stack, including GPU architecture, systems, and software stacks.
